Have you ever built a Splunk dashboard that was not only aesthetically pleasing but also genuinely insightful? If so, others may have learned of its value and now want that data shared, even with users who do not have Splunk accounts. We had such a case: the Chief Information Officer (CIO) wanted to add a particular Splunk panel to his intranet site for all employees to see. In this article we recreate that scenario and show how it can be accomplished. The two screenshots below show two ways of embedding Splunk panels into external sites.

Figure 1: Example of a single panel embedded into a page outside of Splunk

Figure 2: Example of two panels embedded into a page outside of Splunk

Problem

There are several issues we need to solve:

- Splunk does not make it easy to share panels, and especially entire dashboards, outside of its platform

- Some data may be sensitive, so keep the potential audience in mind (fortunately, this sharing can be disabled if needed)

- The solution must be low maintenance over the long term, which means no manual updates

- Viewers of the new website must be able to reach the Splunk search head via HTTPS, because the embedded panel is loaded directly from it
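Because an embedded panel is loaded by each viewer's browser, it is worth confirming the HTTPS requirement from a machine on the same network as the intended audience. Below is a minimal sketch using only the Python standard library. The function name `is_reachable` is our own, and the check deliberately ignores any configured HTTP proxy so that it tests a direct connection.

```python
import ssl
import urllib.error
import urllib.request


def is_reachable(url: str, timeout: float = 5.0) -> bool:
    """Return True if an HTTPS request to `url` receives any HTTP response."""
    context = ssl.create_default_context()  # verifies the server's certificate
    opener = urllib.request.build_opener(
        urllib.request.ProxyHandler({}),  # ignore proxy env vars: test a direct connection
        urllib.request.HTTPSHandler(context=context),
    )
    try:
        with opener.open(url, timeout=timeout):
            return True
    except urllib.error.HTTPError as err:
        # The server answered with an error status (e.g. 401 or 404),
        # so the host and port are reachable over HTTPS.
        err.close()
        return True
    except (urllib.error.URLError, OSError):
        # DNS failure, refused connection, timeout, or TLS/certificate error.
        return False
```

For example, `is_reachable("https://splunk.example.com:8000/")`, where the host is a hypothetical placeholder and 8000 is Splunk Web's default port (yours may differ). Any HTTP response, including a redirect to the login page or an error status, counts as reachable. Only network or TLS failures return `False`. A self-signed certificate will also make the check fail, which is a hint that browsers may refuse or warn about the embedded frame as well.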

Potential Solution

The solution we show here uses a scheduled saved report to share a panel. The steps are:

1) Build your panel with the appropriate search, then click Save As > Report

Figure 3: Creating a report

2) Select the Content and Time Range Picker options

Figure 4: Saving the report

3) After saving the report, schedule it (only scheduled reports can be embedded)

Figure 5: Schedule the report

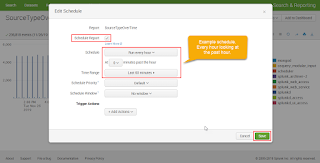

4) Specify the schedule. This example updates the report every hour with the last hour of data

Figure 6: Schedule parameters
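If you are sharing every panel of a dashboard, steps 1 through 4 can also be scripted. Below is a minimal sketch that builds, but does not send, a request to Splunk's `saved/searches` REST endpoint. It uses the `is_scheduled`, `cron_schedule`, `dispatch.earliest_time`, and `dispatch.latest_time` parameters to reproduce the hourly schedule from step 4. It assumes the management port (8089 by default) is reachable and that you authenticate with a Splunk authentication token sent as a Bearer header. The function name, host, owner, app, and report names are hypothetical. Embedding is not handled here and is still enabled with Edit > Embed.

```python
import urllib.parse
import urllib.request


def build_scheduled_report_request(
    mgmt_url: str,   # e.g. "https://splunk.example.com:8089" (hypothetical host)
    owner: str,      # e.g. "nobody" for an app-level shared report
    app: str,        # e.g. "search"
    name: str,       # report title
    search: str,     # the SPL behind the panel
    auth_token: str, # Splunk authentication token (assumed to be enabled)
) -> urllib.request.Request:
    """Build (but do not send) a POST that creates an hourly scheduled report."""
    endpoint = (
        f"{mgmt_url.rstrip('/')}/servicesNS/"
        f"{urllib.parse.quote(owner, safe='')}/{urllib.parse.quote(app, safe='')}"
        "/saved/searches"
    )
    params = {
        "name": name,
        "search": search,
        "is_scheduled": "1",
        "cron_schedule": "0 * * * *",     # run at the top of every hour
        "dispatch.earliest_time": "-1h",  # covering the last hour of data
        "dispatch.latest_time": "now",
    }
    body = urllib.parse.urlencode(params).encode("utf-8")
    return urllib.request.Request(
        endpoint,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {auth_token}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )
```

To actually create the report, pass the returned request to `urllib.request.urlopen` (or an opener with a suitable SSL context). If you prefer each run to cover whole clock hours, Splunk's snap-to syntax (`-1h@h` to `@h`) is an alternative to `-1h` and `now`.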

5) Generate the embedded link by clicking Edit > Embed

Figure 7: Generating the embedded link

6) Copy the embedded iframe code into the external site (an example page is shown in the Demo Page Code section below)

Figure 8: Embedded iframe link

Conclusion

This is one way to create a low-maintenance panel that is shared outside of Splunk. To share an entire dashboard, repeat the process for every panel in it. Just be mindful of the sensitivity of the data: if you later decide that the data is no longer needed or should not be shared, embedding can be disabled. If you have a different method of sharing panels, and especially entire dashboards, we would love to hear it. Feel free to post it in the comments below, and as always, happy Splunking.

Demo Page Code

This demo page contains two embedded saved reports: the panel on the left is a single value, and the one on the right is a timechart. Remember to replace both occurrences of YOUR_EMBEDDED_LINK_HERE with your own embed links.

```html
<html>
<head>
<title>This is an embedded demo</title>
<style type="text/css">
  td {
    height: 300px;
    vertical-align: middle; /* "center" is not a valid vertical-align value */
    text-align: center;     /* centers the iframe horizontally within its cell */
  }
  iframe {
    vertical-align: middle;
  }
</style>
</head>
<body>
<h2 style="text-align: center;">CIO's Corner</h2>
<table style="width: 100%;" border="1">
  <tr>
    <th>Total Count</th>
    <th>Count over Time</th>
  </tr>
  <tr>
    <!-- Left: single value panel -->
    <td width="25%" height="300"><iframe frameborder="0" scrolling="no" src="YOUR_EMBEDDED_LINK_HERE"></iframe></td>
    <!-- Right: timechart panel, stretched to fill its cell -->
    <td width="75%" height="300"><iframe height="100%" width="100%" frameborder="0" scrolling="no" src="YOUR_EMBEDDED_LINK_HERE"></iframe></td>
  </tr>
</table>
</body>
</html>
```
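To keep the page low maintenance, the placeholder substitution can be scripted rather than done by hand. The sketch below, with a hypothetical function name, replaces each `YOUR_EMBEDDED_LINK_HERE` in order with the next link. It HTML-escapes each link because the link lands inside a `src="..."` attribute and embed links may carry query strings containing `&`. It refuses to run if the number of links does not match the number of placeholders.

```python
import html
from typing import Sequence

# Placeholder string used in the demo page.
PLACEHOLDER = "YOUR_EMBEDDED_LINK_HERE"


def fill_placeholders(page: str, links: Sequence[str]) -> str:
    """Replace each placeholder in `page`, in order, with the next embed link."""
    parts = page.split(PLACEHOLDER)
    if len(parts) - 1 != len(links):
        raise ValueError(
            f"page has {len(parts) - 1} placeholders but {len(links)} links were given"
        )
    pieces = [parts[0]]
    for link, rest in zip(links, parts[1:]):
        pieces.append(html.escape(link))  # "&" -> "&amp;", quotes -> "&quot;"
        pieces.append(rest)
    return "".join(pieces)
```

For the demo page, pass the single value link first and the timechart link second, matching the left-to-right order of the iframes.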